Mar. 29, 2024

Controlling Robots Remotely with Sketches!? Exploring New Interactions Between Humans and Robots

Toyota Motor Corporation's Frontier Research Center (hereafter Toyota) is dedicated to researching robots that coexist with humans, with the aim of creating Mobility for All. With the advancement of machine learning techniques, such as natural language processing and image generation, which can be applied to various tasks, the performance of individual robots and entire robot systems has improved rapidly. We interviewed Iwanaga and Tanada, who are conducting research on interfaces that allow operators to give more intuitive instructions to robots for cooperative and collaborative interaction between humans and robots.

Combining Autonomy and Remote Control in a Robot System Proposed Since 2012

―When it comes to robot research, people tend to focus on the functionality and performance of the robot itself. What was the motivation for starting remote control research?

- Iwanaga

- In 2012, Toyota announced the HSR (Human Support Robot), a research platform for life support robots*1, and has since been conducting collaborative research with researchers from around the world*2. (Its operation was transferred to the Robotics Society of Japan in April 2023. Currently, 57 organizations from 10 countries participate.) We have also been conducting field trials in homes and facilities. From the beginning of HSR development, Toyota has believed that it is important to create a robot system that combines the robot's autonomous functions with remote control by humans, and we have been working toward that. By allowing humans to intervene in the system through remote control, human cognition and judgment can handle the unknown environments and objects that autonomous robots struggle with. In addition, at the Tokyo 2020 Paralympic Games, Toyota and Toyota Loops jointly provided services by remotely operating multiple HSRs at the stadium in Tokyo from Toyota City, Aichi Prefecture*3. I was deeply moved when the operator, who had worked with us to develop the robot, expressed her surprise and excitement, saying, "In terms of career choices for people with disabilities, I had given up on anything other than desk jobs, such as clerical work, and I had never thought that there was a way to serve customers through a robot." That was when I realized that remote control of robots is a technology that can expand the possibilities open to operators.

―What are the challenges in remote control of robots?

- Iwanaga

- Robots have many functions, including wheels for movement, arms and hands composed of multiple joints, and cameras for ensuring visibility. Operators control the robot while watching live video from the robot's remote location on a screen, so they are actually performing many operations based on limited information. The key research question is how to make it easy for operators to move the robot as they intend.

―I see. It would be great if anyone can move the robot "easily" and "as intended" without practicing for a long time, expanding the possibilities of virtual movement.

- Iwanaga

- That's right. We believe that the value of remote control is enhanced when the operator can move the robot as he or she wishes, rather than only being able to select and execute predetermined scenarios or programmed movements. At the same time, we do not want the operator to bear a heavy burden. Of course, the appropriate interface will vary depending on the use case, but this research, which began in 2021, is based on the concept of a sketch interface that allows anyone, anywhere, anytime to remotely control the robot easily and as intended, and to direct it to perform physical tasks (Video 1).

- Video 1 Explanation of the operation of the sketch interface

Overview of the Sketch Interface

―First, what does it mean to control the robot with sketches?

- Iwanaga

- When people want to convey something to someone, they often use hand-drawn shapes or pictures. The idea is that this can also be applied as a means for anyone to easily convey their intentions to robots (patent application filed in 2019*4). In fact, there have been studies that use hand-drawn lines on a flat map to indicate the robot's planar movement path*5, but as far as we could find at the beginning of the project, there were no examples of using a two-dimensional sketch interface to control a robot arm that moves in three dimensions. Recently, there have been studies that represent the trajectory of a robot arm with a sketch in order to improve the generalization capabilities of learning systems for autonomous robots*6. This has made us realize the importance of conveying the operator's intentions not only through language but also through sketches.

―What are the specific advantages of conveying intentions through sketches?

- Iwanaga

- Imagine grasping and carrying objects in your daily life. People unconsciously know which part of an object is best to grasp and adjust their grip according to the situation and their needs. For example, we grasp a toothbrush by the handle, we grasp a dirty plate without touching the dirty part, and we grasp a baseball cap by the brim because that makes it easier to hang on a hat rack. It's similar to the concept of affordance*7, and the advantage of sketches is that, through rough lines and symbols, people can easily convey what they unconsciously feel they want to do and what would work better for the robot.

Implementation of Prototypes and Identified Challenges through User Evaluations

―How did you achieve it from a technical standpoint?

- Iwanaga

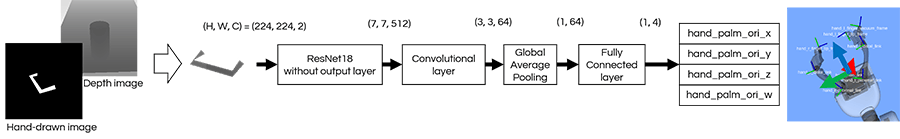

- First, we developed three basic functions: changing the robot's viewpoint, moving the robot, and grasping objects (Videos 2, 3, and 4). With "changing the robot's viewpoint," the operator controls the orientation of the robot's onboard camera while looking only at the image it captures, shown on the tablet screen (hereafter camera image). The operator moves a stylus freely on the tablet screen to change the direction of the robot's camera. Next, for "moving the robot," the operator draws a line on the ground in the camera image to indicate the desired path. The system then uses the line drawn on the image to extract three-dimensional position data from the RGBD sensor (a sensor that can acquire color and depth images) and gives the robot a movement instruction. Lastly, "grasping objects" posed the greatest challenge. The operator draws a "c-shaped" symbol, which mimics the shape of the robot's hand, on the screen around the object they want to grasp. We extract the position and orientation of the "c-shaped" symbol from the image and find the boundary points between the object and the environment using depth information obtained from the RGBD sensor, thereby calculating the position at which to grasp the three-dimensional object. As for the gripping direction, we created training data on a simulator by fixing the environment and the object, and implemented a learning model to infer it (Figure 1).

- Video 2 Changing the viewpoint

- Video 3 Moving the robot

- Video 4 Grasping objects

- Figure 1 Learning model to estimate the gripping direction
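The "moving the robot" step described above, turning a line drawn on the camera image into three-dimensional positions, can be illustrated with a standard pinhole camera back-projection. The following is only a minimal sketch under stated assumptions, not the implementation used in this research: the function name `deproject_stroke`, the intrinsics `fx`, `fy`, `cx`, `cy`, and the depth image layout (metres, indexed as `depth_m[row][column]`) are all hypothetical.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int]               # (u, v) = (column, row) in the camera image
Point3D = Tuple[float, float, float]  # (x, y, z) in the camera frame, in metres


def deproject_stroke(
    stroke: Sequence[Pixel],
    depth_m: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point3D]:
    """Back-project sketched pixels into 3D points using the pinhole model.

    Assumption: depth_m[v][u] holds the depth in metres aligned with the
    color image, and a value <= 0 means the sensor returned no depth.
    """
    points: List[Point3D] = []
    for u, v in stroke:
        # Skip parts of the stroke that fall outside the depth image.
        if not (0 <= v < len(depth_m) and 0 <= u < len(depth_m[v])):
            continue
        z = depth_m[v][u]
        if z <= 0:
            continue  # no valid depth measurement at this pixel
        x = (u - cx) * z / fx
        y = (v - cy) * z / fy
        points.append((x, y, z))
    return points


# Tiny synthetic example: a flat 4x4 depth image at 2 m with one missing pixel.
depth = [[2.0] * 4 for _ in range(4)]
depth[1][1] = 0.0
stroke = [(0, 0), (1, 1), (2, 2), (9, 9)]
waypoints = deproject_stroke(stroke, depth, fx=2.0, fy=2.0, cx=2.0, cy=2.0)
print(waypoints)
```

The resulting points are expressed in the camera frame. A real system would still need to transform them into the robot's base or map frame before planning a path, and would likely smooth or resample the stroke first.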

―What were the feedback and impressions from users who actually tried it?

- Iwanaga

- We asked ten participants in their 20s to 50s at Toyota to perform a remote operation task using the sketch interface to pick up three cans*8. The results showed that the operation itself was intuitive and easy, and that once the robot started moving, its movement matched their expectations. However, we discovered two major challenges regarding the grasping function. The first challenge is that operators cannot give instructions truly "freely." The rules for drawing instructions, designed to make it easier for the system to understand the operator's instructions, ended up becoming constraints for the users. The second challenge is how to prioritize between the operator's intention and the feasibility of the movement inferred by the robot, in other words, "arbitration." If the robot moves based on incorrect instructions given by the operator, it can negatively affect the success rate and completion time of the task. We realized the importance of respecting the operator's intention while utilizing the robot's autonomous functions to enhance the overall performance of the system.

Techniques for Interpreting the Operator's Intentions Through Sketches

―How are you addressing the first challenge of operators not being able to give instructions freely?

- Tanada

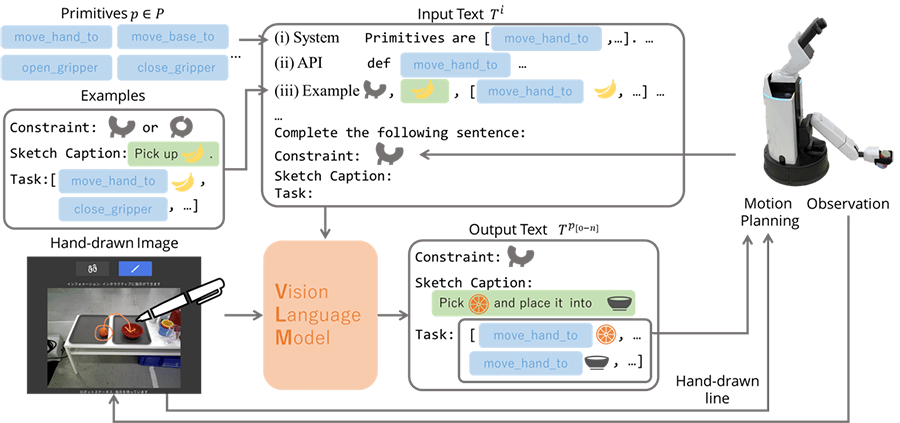

- We are attempting to solve this challenge by utilizing the latest generative AI techniques. In recent years, Vision-Language Models (hereafter VLMs) have emerged. They extend language models like ChatGPT to accept image inputs as well as natural language, and they show high performance in tasks such as image captioning and visual recognition*9. Therefore, we constructed a model that interprets the operator's instructions and generates robot movements from two inputs: images representing the instructions and predefined prompts (text provided as input to the model) (Figure 2). Please watch Video 1 again.

- Figure 2 Intention interpretation model for sketches using VLM

―What can you achieve by using VLM?

- Tanada

- Previously, it was not possible to give instructions for "moving the robot" and "grasping objects" simultaneously, and separate instructions were required for each action by switching the instruction input screen. With a VLM, the system can interpret all instructions at once. For example, if the operator draws a line on the ground in the displayed camera image, the VLM understands it as a movement instruction for the robot. If the operator draws a line from an apple to a bowl, the robot understands it as an instruction to move to where the apple is, grasp it, and put it in the bowl. This means that combinations of multiple tasks, such as moving, grasping, and placing objects, can be expressed in a single instruction. This technology enables a smarter robot that can interpret the operator's intentions more flexibly than before.
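To make the idea of "multiple tasks in a single instruction" concrete, here is a minimal sketch of how a model's interpretation of one sketch could be turned into an ordered list of robot steps. Everything here is an assumption for illustration: the article does not describe the model's output format, so the JSON reply, the action vocabulary `ALLOWED_ACTIONS`, and the names `Step` and `parse_plan` are hypothetical, and the call to the VLM itself is deliberately left out.

```python
import json
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical action vocabulary, based on the tasks named in the interview.
ALLOWED_ACTIONS = {"move", "grasp", "place"}


@dataclass
class Step:
    action: str
    target: Optional[str] = None  # e.g. an object name recognized in the image


def parse_plan(reply: str) -> List[Step]:
    """Validate a model reply (assumed to be a JSON list) as a sequence of steps."""
    raw = json.loads(reply)
    if not isinstance(raw, list):
        raise ValueError("expected a JSON list of steps")
    steps: List[Step] = []
    for item in raw:
        if not isinstance(item, dict):
            raise ValueError("each step must be a JSON object")
        action = item.get("action")
        if action not in ALLOWED_ACTIONS:
            raise ValueError(f"unsupported action: {action!r}")
        steps.append(Step(action=action, target=item.get("target")))
    return steps


# Hypothetical reply for a line drawn from an apple to a bowl.
reply = (
    '[{"action": "move", "target": "apple"},'
    ' {"action": "grasp", "target": "apple"},'
    ' {"action": "place", "target": "bowl"}]'
)
plan = parse_plan(reply)
for step in plan:
    print(step.action, step.target)
```

Validating the reply against a fixed vocabulary before execution is one simple way to keep an unexpected model output from reaching the robot. Whether the actual system works this way is not stated in the interview.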

―It sounds like there are interesting techniques and innovations, such as prompts.

- Tanada

- Thank you. We plan to present these results at the 38th Annual Conference of the Japanese Society for Artificial Intelligence, 2024 (May 28-31, hosted by the Japanese Society for Artificial Intelligence).

Controlling Robots with "Semi-Autonomy"

―How do you plan to achieve the arbitration between the operator's intentions and the robot's autonomous functions?

- Iwanaga

- The idea of Shared Control, which is the sharing of control between humans and robots, has been researched for a long time*10, and I believe it is essential for cooperation and collaboration between humans and robots. We will continue to take on the challenge of constructing robot systems that make comprehensive judgments based on the environment, the situation, and human intentions. For example, regarding the grasping function, learning models have been published that use sensor information to output multiple candidate positions and orientations of the robot's hand for physically grasping an object. We consider selecting the candidate that is closest to the operator's intention to be one form of arbitration. Also, the model's response may not always match the operator's intention. Therefore, we plan to research mechanisms in which the operator interacts with the robot through the interface to arrive at answers that are closer to the operator's intentions, while also respecting the autonomous functions of the robot and enhancing the overall performance of the system.
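The arbitration idea mentioned above, picking the grasp candidate closest to the operator's intention, can be sketched as a nearest-candidate selection. This is only an illustration under assumptions: the cost function (position distance plus a weighted angle between approach directions), the `angle_weight` value, and the names `Grasp` and `select_grasp` are hypothetical and not taken from the research.

```python
import math
from dataclasses import dataclass
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Grasp:
    position: Vec3  # candidate hand position in metres
    approach: Vec3  # non-zero vector along which the hand approaches


def angle_between(a: Vec3, b: Vec3) -> float:
    """Angle in radians between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(x * x for x in b))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / norm)))


def select_grasp(
    candidates: Sequence[Grasp],
    sketch_position: Vec3,
    sketch_approach: Vec3,
    angle_weight: float = 0.1,  # assumed trade-off: metres per radian
) -> Grasp:
    """Return the feasible candidate that best matches the operator's sketch."""
    def cost(g: Grasp) -> float:
        return (
            math.dist(g.position, sketch_position)
            + angle_weight * angle_between(g.approach, sketch_approach)
        )
    return min(candidates, key=cost)


# Two hypothetical candidates from an autonomous grasp planner.
candidates = [
    Grasp(position=(0.50, 0.00, 0.10), approach=(0.0, 0.0, -1.0)),  # top-down
    Grasp(position=(0.50, 0.02, 0.10), approach=(1.0, 0.0, 0.0)),   # from the side
]
# The operator's sketch suggests a top-down grasp near the second position.
chosen = select_grasp(candidates, (0.50, 0.02, 0.10), (0.0, 0.0, -1.0))
print(chosen)
```

Because the choice is restricted to candidates the autonomous planner already considers feasible, the operator's intention steers the result without overriding feasibility, which is the balance the arbitration discussion is aiming for.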

―Both the development of autonomous functions and remote-control technologies that cooperate with operators are important in robot research.

- Tanada

- Yes, I hope we can create a cycle of research that contributes to the development of both aspects. For example, if the interface we developed allows operators to control robots easily and accurately as they intend, it may also help with collecting training data, which is essential for improving the performance of autonomous functions. Since Toyota has many colleagues who focus on improving the performance of autonomous robots, I would like to conduct research while receiving feedback and opinions from them.

Future Aspirations

- Iwanaga

- We are seeing more and more opportunities to encounter security, transportation, and serving robots, making remote control of robots an increasingly familiar technology. In the future, we aim to actively submit papers to international conferences and to contribute to the implementation of robots in society through our technological advancements.

- Tanada

- We would be happy if many people took an interest in this work and we could build a network of colleagues.

Authors

Yuka Iwanaga

Assistant Manager, Collaborative Robotics Research Group, R-Frontier Div., Frontier Research Center, Toyota Motor Corporation

Kosei Tanada

Researcher, Collaborative Robotics Research Group, R-Frontier Div., Frontier Research Center, Toyota Motor Corporation

References

| No. | Reference |
|---|---|
| *1 | TMC Develops Independent Home-living-assistance Robot Prototype |
| *2 | Frontier Research: Engaging in Co-Creation Robot Research with Researchers around the World |
| *3 | Toyota Times: HSR, the Omotenashi Robot: The Technology and People that Supported the Tokyo 2020 (Part 2) |
| *4 | Yamamoto, Takashi. Remote control system and remote control method. US-11904481-B2. 2024-02-20. |
| *5 | D. Sakamoto et al. Sketch and Run: A Stroke-based Interface for Home Robots. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '09, pp. 197-200, 2009. |
| *6 | Jiayuan Gu, Sean Kirmani, Paul Wohlhart, Yao Lu, Montserrat Gonzalez Arenas, Kanishka Rao, Wenhao Yu, Chuyuan Fu, Keerthana Gopalakrishnan, Zhuo Xu, Priya Sundaresan, Peng Xu, Hao Su, Karol Hausman, Chelsea Finn, Quan Vuong, and Ted Xiao. RT-Trajectory: Robotic Task Generalization via Hindsight Trajectory Sketches, 2023. |
| *7 | J. J. Gibson (1966). The Senses Considered as Perceptual Systems. Allen and Unwin, London. |
| *8 | Yuka Iwanaga, Takemitsu Mori, Masayoshi Tsuchinaga, Takashi Yamamoto. Development and Evaluation of an Interface for Communicating Human Intentions to Robots through 2D Handwritten Instructions: A Pilot Study on Object Retrieval Task Instruction Methods. Proceedings of the 41st Annual Conference of the Robotics Society of Japan, Paper No. 1J1-03, 2023. (Only in Japanese.) |
| *9 | Rana, K., Haviland, J., Garg, S., AbouChakra, J., Reid, I., and Suenderhauf, N. SayPlan: Grounding Large Language Models using 3D Scene Graphs for Scalable Robot Task Planning, 2023. |
| *10 | M. Selvaggio, M. Cognetti, S. Nikolaidis, S. Ivaldi and B. Siciliano, "Autonomy in Physical Human-Robot Interaction: A Brief Survey," in IEEE Robotics and Automation Letters, vol. 6, no. 4, pp. 7989-7996, Oct. 2021. |

Contact Information (about this article)

- Frontier Research Center

- frc_pr@mail.toyota.co.jp